The chess24 hall of fame and Jeff Sonas’ chessmetrics

Chess24 has recently released the chess24 hall of fame, a series of videos in which they attempt to rank historical players according to their competitive strength. For this purpose, Jan Gustafsson and Peter Heine Nielsen have made use of the chessmetrics rating system. I have no intention of criticizing the series itself: it is always interesting to see two grandmasters commenting on the classics. However, their ranking, which purports to rest on a more or less objective measure, has a few flaws.

The chessmetrics system was created by Jeff Sonas, a database consultant who, according to his own business profile, graduated with honors from Stanford University and has plenty of experience working with statistics(1). He’s also a regular columnist for chessbase: an impressive CV. In this article I want to tackle one of the weaknesses of his system and, by extension, of the chess24 hall of fame ranking of historical players.

The problem of inflation

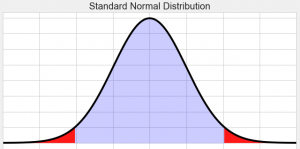

Sonas realized that the Elo system was subject to inflation during the 90s(2). The Elo system is a relative measurement, like IQ scores. This means that the rating of one individual is not independent of the ratings of the other rated individuals in the pool. What Elo measures is the expected score of a player against other rated players. The number assigned to a given player is meaningless on its own; it is only the differences between players that matter(3). This makes the system vulnerable to inflationary (or deflationary) trends, which in turn makes it useless for intertemporal comparisons.
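To make the point about differences concrete, here is a minimal Python sketch. It assumes the widely used logistic form of the Elo expectation with a 400-point scale, which may differ slightly from the official conversion tables; the function name `expected_score` and the sample ratings are mine, chosen only for illustration.

```python
def expected_score(rating_a: float, rating_b: float) -> float:
    """Expected score of player A against player B.

    Assumption: the common logistic approximation of Elo with a
    400-point scale, not necessarily the exact official tables.
    """
    return 1.0 / (1.0 + 10.0 ** ((rating_b - rating_a) / 400.0))


# The same 100-point gap at two very different absolute levels.
low = expected_score(1600, 1500)
high = expected_score(2800, 2700)

print(f"1600 vs 1500: {low:.3f}")
print(f"2800 vs 2700: {high:.3f}")
```

Both calls give the same expectation (roughly 0.64), because only the difference enters the formula. Adding any constant to every rating in the pool leaves all predictions untouched, which is exactly why the absolute level can drift over time.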

Rating standardization

To solve this problem, Sonas made a simple but clever correction: he standardized the average rating of the players so that it remains the same across time(4). He simply kept the average rating of his sample constant. This is the same procedure that is performed with IQ tests(5). It is, in principle, correct, and probably a necessity for the actual Elo ratings. However, Sonas’ data was far from randomized. He worked exclusively with an elite sample of top events in the history of chess, and it is this top tail of the distribution of players that he was standardizing, rather than the distribution of chess players as a whole(6).

Sample sizes and rating standardization

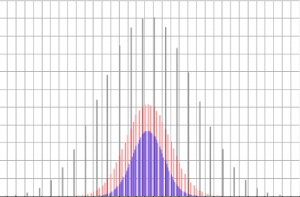

To understand the absurdity of this procedure, let us imagine we removed half of the players in the rating list by a random criterion. This would make about half of the players in the top-20 disappear. The top-10 of the new ranking would be similar to the top-20 of the original ranking, and their average ratings would also be similar. If we applied Sonas’ procedure, we would have to raise the average rating of the new top-10 to match the average of the original top-10. The entire distribution would be displaced to the right (let’s not forget that the Elo system is based on rating differentials, so these must remain the same after the standardization). The reverse would occur if the number of players suddenly increased: the number of elite players would increase proportionally, the raw average of the top-10 would rise accordingly, and the standardization would push the entire distribution to the left.
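The thought experiment above can be checked with a small simulation. This is only a sketch under assumptions of my own: ratings are drawn from a normal distribution with mean 1500 and standard deviation 200, the pool has 2000 players, and the results are averaged over 200 simulated pools. None of these numbers come from chessmetrics.

```python
import random
from statistics import mean


def top_average(ratings, k):
    """Average of the k highest ratings."""
    return mean(sorted(ratings, reverse=True)[:k])


def halving_experiment(n_players=2000, trials=200, seed=0):
    """Average three quantities over many simulated pools:
    top-10 mean of the full pool, top-20 mean of the full pool,
    and top-10 mean after randomly dropping half of the players."""
    rng = random.Random(seed)
    full10, full20, half10 = [], [], []
    for _ in range(trials):
        # Hypothetical pool: normally distributed ratings (an assumption).
        pool = [rng.gauss(1500, 200) for _ in range(n_players)]
        survivors = rng.sample(pool, n_players // 2)
        full10.append(top_average(pool, 10))
        full20.append(top_average(pool, 20))
        half10.append(top_average(survivors, 10))
    return mean(full10), mean(full20), mean(half10)


full10, full20, half10 = halving_experiment()
# Shift that a "keep the top-10 average constant" rule would add
# to every rating in the halved pool.
shift = full10 - half10

print(f"full top-10: {full10:.0f}")
print(f"full top-20: {full20:.0f}")
print(f"halved top-10: {half10:.0f}")
print(f"upward shift needed: {shift:.0f} points")
```

Under these assumptions the top-10 of the halved pool lands close to the original top-20 and clearly below the original top-10, so a rule that pins the top-10 average would lift every remaining player even though nobody's strength has changed.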

Population growth and sample size

Of course, there hasn’t been any such sudden change in the number of players throughout history. However, it is clear that chess has become more and more popular over time, especially due to its proliferation in the eastern world. The number of players has increased dramatically, which makes the history of chess analogous to our example above. Since Sonas corrected the average throughout the entire time span, it doesn’t matter whether the change was sudden or progressive: the bias remains the same in the end.

Sonas could have solved that problem by adjusting his sample size in proportion to an estimated number of active chess players. That would require some historical research, but at the very least he could have adjusted it to the world’s population. According to chessbase.com, the estimated number of regular chess players today is greater than 600 million(7); that is more than a third of the world’s population in the year 1900. The Earth then had about 1.6 billion inhabitants; today there are 7.6 billion. Not only is chess more popular than ever, but the general population has increased almost fivefold. It is logical to suppose that the top of the chess rankings should have become more crowded in the same proportion.

Illustration of the problem with the chessmetrics system

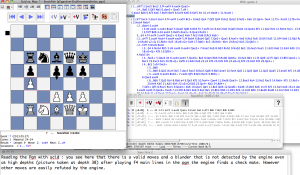

To illustrate this point, let us look at the chessmetrics ranking of January of 1900(8) and compare it with that of January of 2000(9).

| January 1900 rating list | January 2000 rating list |
| --- | --- |
| 2858: Lasker | 2868: Kasparov |
| 2805: Tarrasch | 2810: Anand |
| 2798: Pillsbury | 2796: Kramnik |
| 2743: Janowsky | 2761: Shirov |
| 2743: Maróczy | 2748: Morozevich |

The two rankings look almost identical, when in fact there should be at least 4 times as many players in the same rating range in the year 2000 (considering only the ratio of the general population between the two years). The problem is that, while claiming to adjust the average, Sonas was just matching the top spots and dragging down the true average of the distribution. The procedure seems arbitrary, particularly when Sonas himself claims to make the correction in order to prevent inflation(10).

Dubious rankings of chessmetrics

This error generates more than a few absurdities. For example, Sonas ranks Pillsbury and Maróczy in 10th and 11th place in his historical ranking by three-year peak, while Jan Timman is 42nd, Bent Larsen 48th, Artur Jussupow 55th and Judit Polgar a modest 73rd(11). I don’t deny the accomplishments of the former, but we must acknowledge, for the reasons explained above, that they were achieved against much weaker opposition. The latter were quite a bit superior in terms of percentile, but they get demoted by Sonas for no particular reason. In the chess24 hall of fame series, which is based on the chessmetrics system, we find the same kind of aberrations: Efim Bogoljubov above Levon Aronian; Harry Pillsbury above Boris Gelfand; Mikhail Chigorin above Jan Timman; Szymon Winawer above Peter Leko and so on(12).

Are modern players that much better?

It is worth noting that I am not talking about technical ability. It is evident that the average player today is substantially better, technically speaking, than the average player 100 years ago, due to technological advances and the availability of information; the average IQ has also increased. I’m discounting all of that, because advantages enjoyed by all competitors are irrelevant to the Elo system (which, as we know, is based on differentials). With a proper standardization, the true average of the distribution would have the exact same rating by definition, even if the average modern player were technically “superior”.

One could lament that a fair standardization would trash the classical players that we all know and love. This assumption is partially correct, but we must take into account that the Elo system is a measure of competitive strength. Just as it cannot measure raw technical ability, it cannot measure the merit of the pioneers who led the way in the progress of chess. For that purpose, we would need to define the criterion more precisely and use other types of measurements.

Are classical players that much worse?

So are classics such as Lasker, Capablanca and Alekhine patzers compared to modern players? Probably not. First of all, if for example we increased the sample size by a factor of 4, we could expect the top-ranked player prior to the increase to end up, on average, around 4th place after the increase, and to remain number 1 in 25% of the cases (as some simple mathematics can confirm).
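The figures above can be checked with a short simulation. This sketch assumes that the newcomers are drawn from the same strength distribution as the original players, which is the premise of the argument; the pool size of 100 and the number of trials are arbitrary choices of mine.

```python
import random


def rank_after_growth(n_original=100, growth=4, trials=5000, seed=0):
    """Estimate what happens to the old number 1 when the pool grows.

    Returns (share of trials in which the old number 1 stays number 1,
             average rank of the old number 1 in the enlarged pool).
    """
    rng = random.Random(seed)
    n_new = n_original * (growth - 1)
    stays_first = 0
    rank_sum = 0
    for _ in range(trials):
        # Strength is just a random number here: ranks depend only on
        # order, so any continuous distribution gives the same answer.
        old_best = max(rng.random() for _ in range(n_original))
        newcomers_above = sum(rng.random() > old_best for _ in range(n_new))
        rank = 1 + newcomers_above
        stays_first += (rank == 1)
        rank_sum += rank
    return stays_first / trials, rank_sum / trials


p_first, mean_rank = rank_after_growth()
print(f"stays number 1: {p_first:.1%}")
print(f"average new rank: {mean_rank:.2f}")
```

The reasoning behind it is simple: with four times as many players from the same distribution, the old number 1 is the best of the enlarged pool with probability 1/4, and each of the 3n newcomers beats the best of n originals with probability 1/(n+1), so about 3 newcomers are expected above him, for an average rank close to 4.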

In addition to that, the aforementioned players are outliers in the history of chess and were very dominant in their respective times. It is the lower-rated players who would suffer by comparison. A case could also be made that in times when our game was less popular, it was only the most talented who were attracted to chess and pursued a career in it. This is quite likely, and that is why a fair estimate of the popularity of chess at different points in time is necessary in order to standardize the rankings and make a fair statistical comparison across time.

Conclusion on chessmetrics and the chess24 hall of fame

Does this mean that the chessmetrics system could be the ultimate word for a historical ranking, provided that this issue was amended? Is it fair for comparing contemporaries? Is it better than Elo? These are some of the questions we will explore in upcoming articles. My belief is that Sonas is on the right track, at least in spirit, but his system is still far from perfect. As for the chess24 hall of fame series, it should probably be taken as a way to familiarize ourselves with the classics and enjoy watching good games while learning chess history, rather than as any kind of authoritative source for the classification of historical players.

References:

1) http://www.sonasconsulting.com/about/

2) https://en.chessbase.com/post/rating-inflation-its-causes-and-poible-cures

3) https://handbook.fide.com/chapter/B022017 (see section 8)

4) http://chessmetrics.com/cm/CM2/Formulas.asp

5) http://www.psychology.emory.edu/clinical/bliwise/Tutorials/SOM/smmod/scalemea/print2.htm

6) See 4)

7) https://en.chessbase.com/post/che-redux-how-many-people-play-che-

8) http://chessmetrics.com/cm/CM2/SingleMonth.asp?Params=190020SSSSS3S000000190001111000000000000010100

9) http://chessmetrics.com/cm/CM2/SingleMonth.asp?Params=198520SSSSS3S000000200001111000000000000010100

10) See 4)

11) http://chessmetrics.com/cm/CM2/PeakList.asp?Params=198520SSSSS3S000000000000111000000000000010100

12) https://chess24.com/es/aprende/video/video/hall-of-fame-the-50-greatest-chess-players-of-all-time/intro-50-great-players